Merge the preprocess step into the easydeploy onnx/tensorrt model #742

Motivation

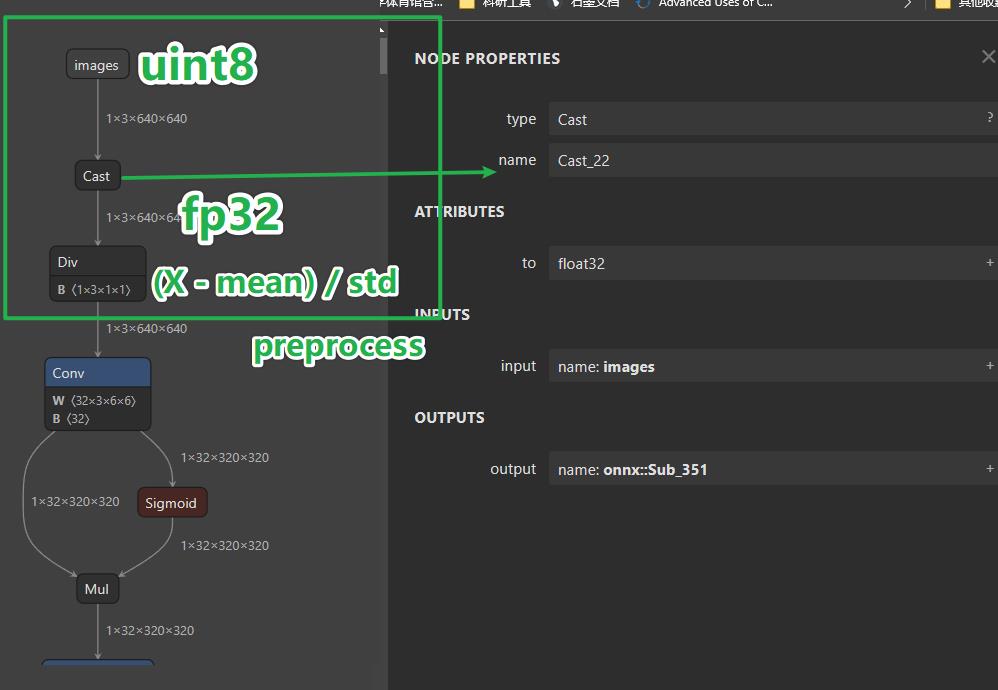

Embed the preprocessing operation, (image_rgb - mean) / std, into the ONNX/TensorRT model, so that: 1. the preprocessing no longer has to be repeated in every inference task; 2. image_rgb can be fed directly into the ONNX/TensorRT model.

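In more detail: the RGB normalization step is merged into the ONNX model as well. 1. Downstream image and video processing tasks no longer need to repeat the preprocessing, and the exported model no longer depends on the mean and std parameters in the config file. This reduces the data preprocessing load. 2. The uint8 image RGB data (NCHW) can be passed straight to the ONNX model, which makes deployment more convenient. Accuracy is not affected.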

Modification

Export class PreProcess(torch.nn.Module) into the deployment model. This is the main change.
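
To make the idea concrete, here is a minimal sketch of what such a module could look like. It is not the code from this PR: the constructor arguments, the buffer layout, and the mean/std values are assumptions chosen for illustration, and `torch.nn.Identity()` stands in for the real detector.

```python
import torch


class PreProcess(torch.nn.Module):
    """Illustrative sketch: normalize a uint8 NCHW RGB batch inside the model."""

    def __init__(self, mean, std):
        super().__init__()
        # Reshape to (1, C, 1, 1) so the values broadcast over N, H and W.
        mean_t = torch.tensor(mean, dtype=torch.float32).view(1, -1, 1, 1)
        std_t = torch.tensor(std, dtype=torch.float32).view(1, -1, 1, 1)
        # Buffers are saved with the module, so an exported graph would carry
        # them as constants instead of reading them from a config file.
        self.register_buffer("mean", mean_t)
        self.register_buffer("std", std_t)

    def forward(self, image_rgb):
        # Cast uint8 -> float32, then apply (image_rgb - mean) / std.
        return (image_rgb.float() - self.mean) / self.std


# Hypothetical values; the real ones come from the model's config.
preprocess = PreProcess(mean=[0.0, 0.0, 0.0], std=[255.0, 255.0, 255.0])

# Placeholder for the real network: prepend the preprocessing to the model.
deploy_model = torch.nn.Sequential(preprocess, torch.nn.Identity())

image_rgb = torch.full((1, 3, 4, 4), 255, dtype=torch.uint8)
normalized = deploy_model(image_rgb)
```

Because the normalization is the first layer of the wrapped module, exporting `deploy_model` rather than the bare network is what would move the step into the graph.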

Feed the image_rgb data into the model

The test code is shown below. It does not change the API at all.
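
As a stand-in illustration only (this is not the PR's own test code), preparing the input for a model that accepts uint8 NCHW data could look roughly like the sketch below. The helper name `to_nchw_uint8` and the 640x640 image size are hypothetical.

```python
import numpy as np


def to_nchw_uint8(image_rgb):
    """Turn one HWC uint8 RGB image into a 1xCxHxW uint8 batch."""
    if image_rgb.dtype != np.uint8:
        raise ValueError("expected uint8 pixel data")
    if image_rgb.ndim != 3 or image_rgb.shape[2] != 3:
        raise ValueError("expected an HxWx3 RGB image")
    # HWC -> CHW, then add the batch dimension. No mean/std arithmetic here:
    # that part is assumed to live inside the exported model.
    chw = image_rgb.transpose(2, 0, 1)
    return np.ascontiguousarray(chw[np.newaxis])


# Dummy image standing in for a decoded RGB frame.
dummy_image = np.zeros((640, 640, 3), dtype=np.uint8)
batch = to_nchw_uint8(dummy_image)
```

With ONNX Runtime, such a batch would typically be passed as `session.run(None, {session.get_inputs()[0].name: batch})`, assuming `session` is an `onnxruntime.InferenceSession` created from the exported file.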

Inspect the ONNX model. You can see that the model now accepts RGB uint8 inputs.

Inspect the ONNX model.

The results compared with the original version.

Checklist